
Qwen 3.5: Open-source Multimodal AI Models with MoE Architecture and 201 Languages

Discover Qwen 3.5, Alibaba’s family of open-source AI models that rivals GPT-5 and Claude. MoE architecture, impressive performance, and 201 languages.

In mid-February 2026, Alibaba shook the world of artificial intelligence by unveiling Qwen 3.5, a complete family of vision-language models (VLMs) that pushes the boundaries of what was thought possible with open-source models. As the race for AI agents intensifies, this new generation of multimodal models could well be a game-changer for developers and businesses worldwide.

Qwen3.5🚀 pic.twitter.com/qDwkQRtnUu

— Qwen (@Alibaba_Qwen) February 16, 2026

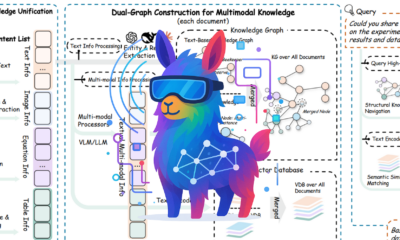

An Architecture that Revolutionizes the Efficiency of AI Models

The true tour de force of Qwen 3.5 lies in its architecture. Unlike dense models, which activate all their parameters for every token, Qwen 3.5 adopts a Mixture-of-Experts (MoE) approach in which a router sends each token to only a small subset of its feed-forward sub-networks, called experts. Take the flagship Qwen3.5-397B-A17B: of its 397 billion parameters, only 17 billion are activated for each token. This allows cutting-edge performance while drastically reducing computation costs.
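
To make the idea of activating only a fraction of the parameters concrete, here is a toy top-k Mixture-of-Experts layer in plain Python. It is a minimal sketch, not Qwen's implementation: the number of experts, the value of k, the linear router and the scalar "experts" are all illustrative assumptions. Every expert is scored for the token, but only the k best-scoring experts actually run, and their outputs are mixed with softmax weights.

```python
import math

def softmax(xs):
    # Subtract the max for numerical stability
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)[:k]
    weights = softmax([router_logits[i] for i in ranked])
    return list(zip(ranked, weights))

def moe_forward(token, experts, router_vectors, k):
    """Toy MoE layer: only k of len(experts) experts are evaluated for this token."""
    # One router logit per expert: dot product of the token with that expert's router vector
    logits = [sum(r * t for r, t in zip(rv, token)) for rv in router_vectors]
    out = [0.0] * len(token)
    for idx, weight in top_k_route(logits, k):
        y = experts[idx](token)  # the other experts are never called
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out

# Four hypothetical "experts", each simply scaling its input by a different factor
experts = [lambda x, s=s: [s * xi for xi in x] for s in (1.0, 2.0, 3.0, 4.0)]
router_vectors = [[0.1, 0.0], [0.0, 0.1], [1.0, 1.0], [0.5, 0.5]]

print(moe_forward([1.0, 2.0], experts, router_vectors, k=2))
print(f"Active share of Qwen3.5-397B-A17B: {17 / 397:.1%}")
```

The cost of a layer like this scales with k rather than with the total number of experts, which is the property the 397B/17B split exploits at much larger scale.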

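The architecture combines Gated Delta Networks (Gated DeltaNet), a form of linear attention, with classic full-attention blocks in a 3:1 ratio: three linear-attention layers for every full-attention layer. Linear-attention layers keep a fixed-size state instead of a key/value cache that grows with every token, so only one layer in four accumulates memory as the context lengthens, while the full-attention layers preserve the precision required for complex reasoning. This enables context windows of up to 1 million tokens, a capability that until recently seemed reserved for high-end server infrastructure.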
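
The memory argument can be checked with back-of-the-envelope arithmetic. In the sketch below, every fourth layer is a full-attention layer and the other three are linear-attention (Gated DeltaNet) layers, matching the 3:1 ratio. Only the full-attention layers keep a key/value cache that grows with context length; each linear-attention layer carries a fixed-size state, which is left out of the totals. The layer count, number of KV heads, head dimension and 16-bit cache are hypothetical values chosen for illustration, not Qwen 3.5's published configuration.

```python
def layer_pattern(num_layers, linear_per_full=3):
    """Repeat `linear_per_full` linear-attention layers followed by one full-attention layer."""
    period = linear_per_full + 1
    return ["full" if (i + 1) % period == 0 else "linear" for i in range(num_layers)]

def kv_cache_bytes(seq_len, num_full_layers, num_kv_heads, head_dim, bytes_per_value=2):
    """KV-cache size: keys and values (factor 2) for each full-attention layer and token."""
    return 2 * num_full_layers * num_kv_heads * head_dim * bytes_per_value * seq_len

# Hypothetical configuration, for illustration only
NUM_LAYERS, KV_HEADS, HEAD_DIM = 48, 2, 256
CONTEXT = 1_000_000

pattern = layer_pattern(NUM_LAYERS)
hybrid_full_layers = pattern.count("full")

dense = kv_cache_bytes(CONTEXT, NUM_LAYERS, KV_HEADS, HEAD_DIM)
hybrid = kv_cache_bytes(CONTEXT, hybrid_full_layers, KV_HEADS, HEAD_DIM)

print(f"All full attention: {dense / 1e9:.1f} GB of KV cache")
print(f"3:1 hybrid:         {hybrid / 1e9:.1f} GB of KV cache")
```

Under these assumptions the growing part of the cache shrinks by a factor of four. What remains still scales linearly with context, so the hybrid design makes million-token windows practical rather than free.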

Performance that Shakes the Competition

Alibaba's published benchmarks are striking. Qwen3.5-35B-A3B, with only 3 billion active parameters, surpasses its predecessor Qwen3-235B-A22B, which activated 22 billion. Even more impressively, this mid-sized model compares favorably with OpenAI’s GPT-5.2 and Anthropic’s Claude Opus 4.5 on certain tasks.
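
A common rule of thumb puts the forward-pass cost of a transformer at roughly 2 FLOPs per active parameter per token. Applying it to the active-parameter counts encoded in the model names (the "A" suffix) shows why a 3B-active model is so much cheaper to run than its 22B-active predecessor. This is an approximation that ignores attention cost and implementation details, and the name parsing assumes the `<total>B-A<active>B` pattern used in this article.

```python
import re

def parse_moe_name(name):
    """Extract (total, active) parameter counts in billions from names like 'Qwen3.5-35B-A3B'."""
    match = re.search(r"(\d+(?:\.\d+)?)B-A(\d+(?:\.\d+)?)B", name)
    if match is None:
        return None  # no '-A...B' suffix in the name
    return float(match.group(1)), float(match.group(2))

def forward_gflops_per_token(active_billions):
    # Rule of thumb: ~2 FLOPs per active parameter per token
    return 2 * active_billions

for name in ("Qwen3-235B-A22B", "Qwen3.5-35B-A3B", "Qwen3.5-397B-A17B"):
    total, active = parse_moe_name(name)
    print(f"{name}: {total:.0f}B total, {active:.0f}B active, "
          f"~{forward_gflops_per_token(active):.0f} GFLOPs/token")
```

By this estimate, Qwen3.5-35B-A3B needs roughly one seventh of the per-token compute of Qwen3-235B-A22B (about 6 versus 44 GFLOPs).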

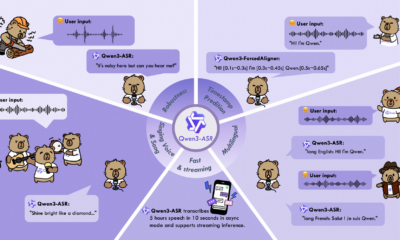

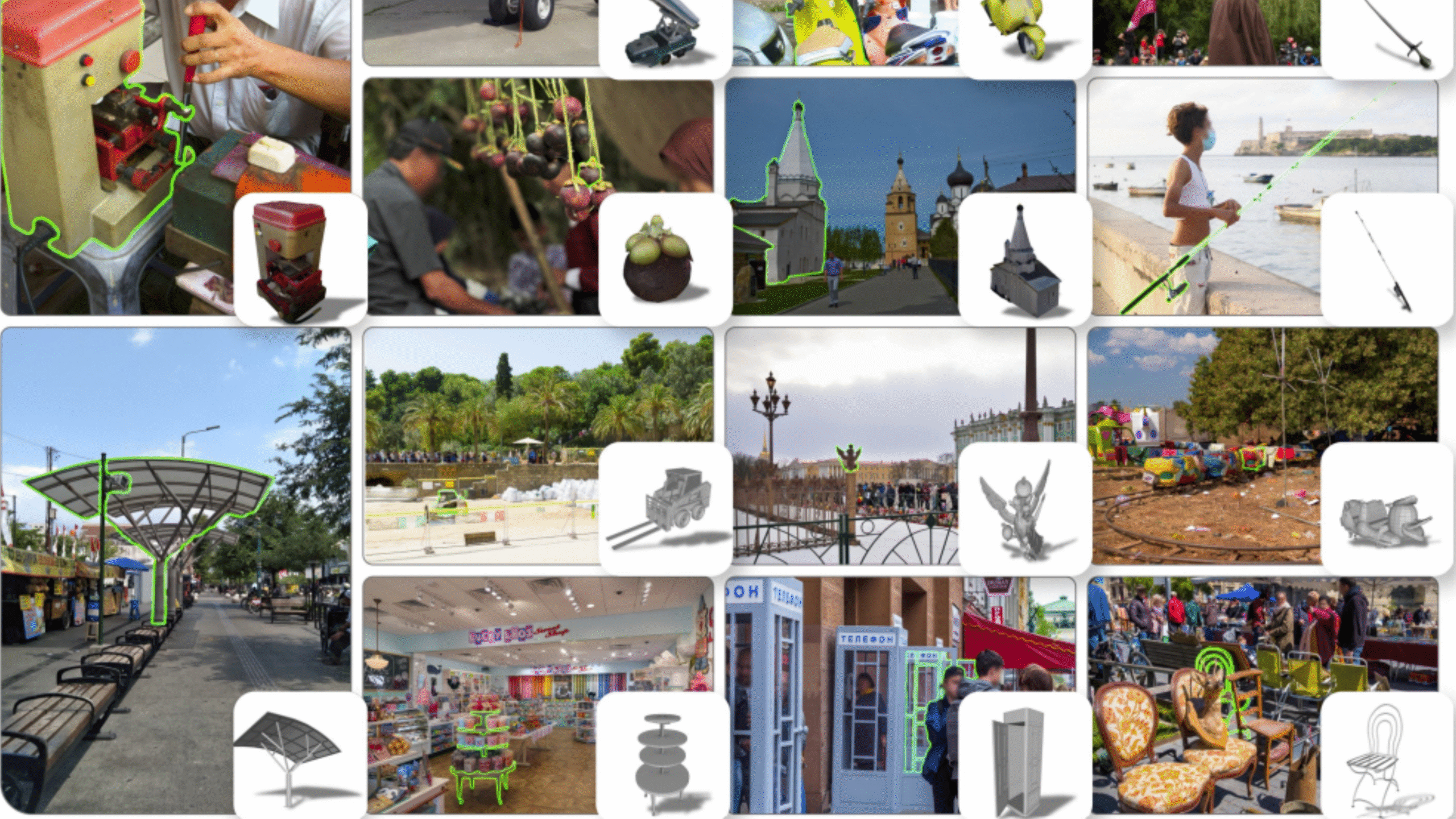

All Qwen 3.5 models are natively multimodal, capable of simultaneously processing text, images, and videos without requiring separate modules. This unified approach, combined with support for 201 languages and dialects, makes Qwen 3.5 a truly global solution for international developers.
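
In practice, multimodal input is usually sent as a single chat message that mixes text and image parts. The sketch below only builds such a request body, using the OpenAI-style chat-completions format that many OpenAI-compatible servers (for example vLLM) accept. The model identifier and image URL are placeholders, and whether a given Qwen 3.5 deployment exposes exactly this format is an assumption to check against its documentation.

```python
import json

def build_multimodal_request(model, prompt, image_urls):
    """Build an OpenAI-style chat-completions body mixing text and image parts."""
    content = [{"type": "text", "text": prompt}]
    content += [{"type": "image_url", "image_url": {"url": url}} for url in image_urls]
    return {"model": model, "messages": [{"role": "user", "content": content}]}

body = build_multimodal_request(
    model="qwen3.5-placeholder",  # hypothetical model id; use the one your server exposes
    prompt="Describe this chart in French.",
    image_urls=["https://example.com/chart.png"],  # placeholder URL
)
print(json.dumps(body, indent=2))
```

Because text and images travel in the same message, the prompt language and the visual content reach one model together, with no separate captioning step.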

A Complete Range to Meet All Needs

Alibaba has deployed a particularly thoughtful product strategy, releasing nine open-source models (17 counting variants) that cover a wide range of use cases. At the top of the pyramid, the flagship 397-billion-parameter model targets the most demanding applications. Qwen3.5-122B-A10B targets server infrastructure with 80GB GPUs, while Qwen3.5-35B-A3B strikes a balance that lets it run, with quantized weights, on consumer hardware with 32GB of VRAM.
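
The hardware targets follow from simple arithmetic: weight memory is roughly the total parameter count times the bytes stored per parameter. In an MoE model every expert must be resident in memory even though only a few run per token, so the total count, not the active one, is what matters here. The sketch ignores the KV cache, activations and runtime overhead, and the bit widths are common quantization levels rather than specific Qwen 3.5 releases.

```python
def weight_gb(params_billions, bits_per_param):
    """Approximate weight memory in GB (1 GB = 1e9 bytes); excludes KV cache and overhead."""
    # billions of parameters x bytes per parameter = gigabytes
    return params_billions * bits_per_param / 8

MODELS = {"Qwen3.5-397B-A17B": 397, "Qwen3.5-122B-A10B": 122, "Qwen3.5-35B-A3B": 35}

for name, total_b in MODELS.items():
    sizes = ", ".join(f"{bits}-bit: {weight_gb(total_b, bits):.1f} GB" for bits in (16, 8, 4))
    print(f"{name}: {sizes}")
```

At 16 bits the 35B model needs about 70 GB for its weights alone, so running it in 32 GB of VRAM implies roughly 4-bit quantization (about 17.5 GB), which leaves headroom for the KV cache. Likewise, the 122B model fits on a single 80 GB GPU only with aggressive quantization (about 61 GB at 4 bits).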

Qwen3.5-27B stands out by supporting a context window of over 800K tokens while remaining accessible on standard hardware. For production needs, Qwen3.5-Flash is a hosted version with a 1-million-token context window by default and built-in tools, at an API price that is highly competitive with Western providers.

The small model series completes the ecosystem with four sizes (0.8B, 2B, 4B, and 9B), all natively multimodal and all supporting a 262K-token context window. These compact models pave the way for deploying AI on edge devices and consumer hardware, thereby democratizing access to advanced AI capabilities.

Qwen 3.5, Multimodal Models Designed for Agentic Workflows

Unlike models designed primarily for conversation, Qwen 3.5 was conceived from the outset for agentic workflows—autonomous AI systems capable of planning, executing multi-step tasks, and interacting with real interfaces.

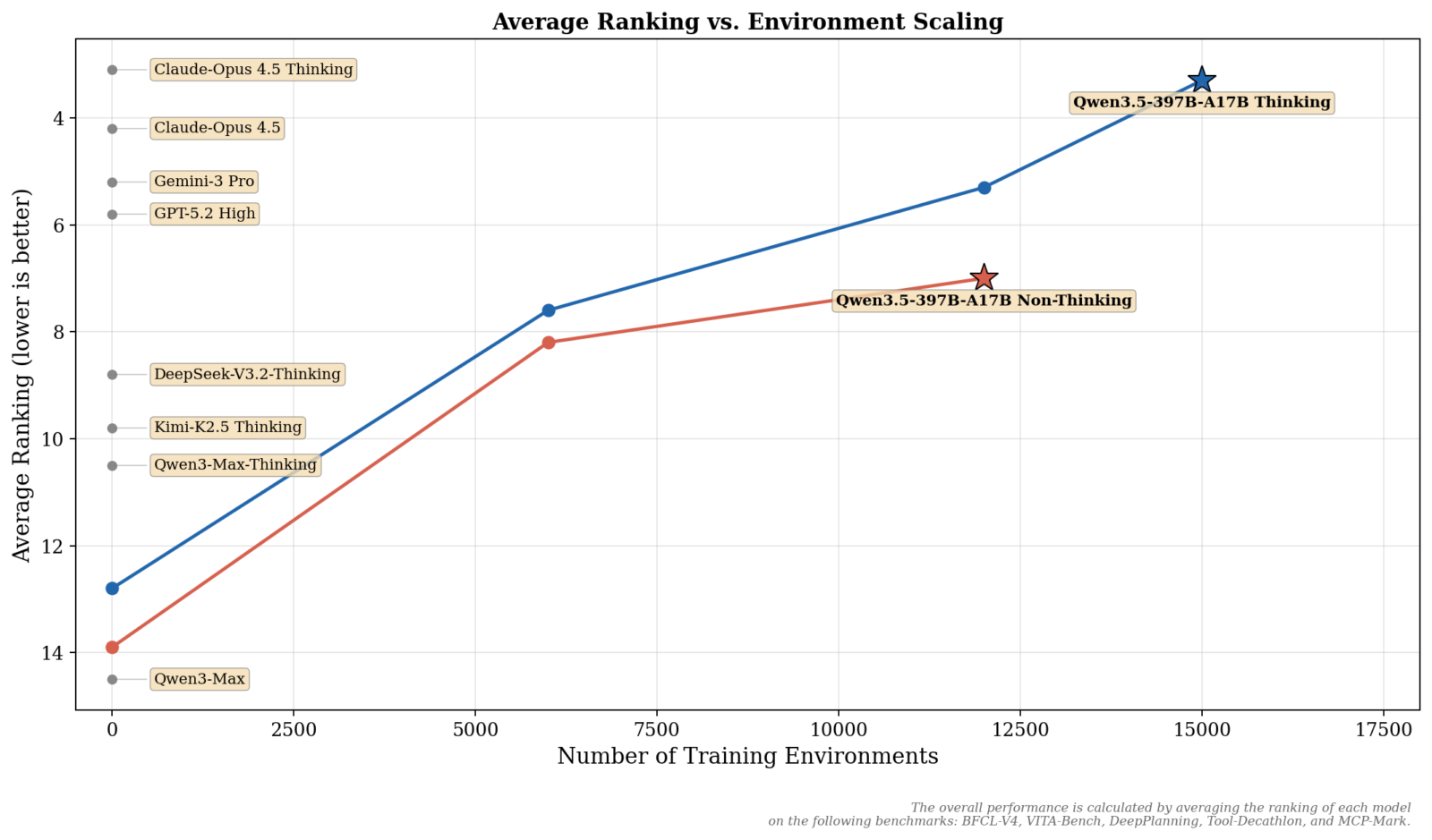

Reinforcement learning (RL) training has been scaled up substantially, with an emphasis on generalization and complex tasks rather than narrow optimization for specific benchmarks. The result? Models that natively know how to call tools, search the web, and run code in an interpreter, and that integrate with major agentic frameworks and tools such as OpenClaw and Claude Code, as well as leading development platforms.
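
Tool use in such frameworks typically follows a simple loop: the application advertises tools as JSON schemas, the model replies with tool calls, and the application executes them and sends back the results. The sketch below shows the execution half of that loop using the OpenAI-style `tools` / `tool_calls` message format. The `word_count` tool is hypothetical, and the assistant reply is stubbed rather than produced by a real Qwen 3.5 call.

```python
import json

# Tool advertised to the model as a JSON schema (OpenAI-style "function" tool);
# TOOLS would be passed as the "tools" field of the chat request
TOOLS = [{
    "type": "function",
    "function": {
        "name": "word_count",  # hypothetical tool for illustration
        "description": "Count the words in a piece of text.",
        "parameters": {
            "type": "object",
            "properties": {"text": {"type": "string"}},
            "required": ["text"],
        },
    },
}]

def word_count(text):
    return len(text.split())

# Maps tool names the model may request to local Python functions
REGISTRY = {"word_count": word_count}

def execute_tool_calls(assistant_message):
    """Run each tool call in an assistant message and return the tool-role replies."""
    replies = []
    for call in assistant_message.get("tool_calls") or []:
        fn = REGISTRY[call["function"]["name"]]
        args = json.loads(call["function"]["arguments"])  # arguments arrive as a JSON string
        replies.append({
            "role": "tool",
            "tool_call_id": call["id"],
            "content": json.dumps(fn(**args)),
        })
    return replies

# Stubbed assistant reply shaped like an OpenAI-compatible response (not real model output)
stub_reply = {
    "role": "assistant",
    "content": None,
    "tool_calls": [{
        "id": "call_0",
        "type": "function",
        "function": {
            "name": "word_count",
            "arguments": json.dumps({"text": "Qwen 3.5 plans and acts"}),
        },
    }],
}

# These messages would be appended to the conversation before calling the model again
print(execute_tool_calls(stub_reply))
```

An agent framework repeats this cycle until the model answers without requesting any tool, which is when `execute_tool_calls` returns an empty list.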

By default, the model runs in “thinking” mode: it generates an internal chain of reasoning before giving its final answer, which improves the reliability and traceability of its decisions. Combined with an architecture that maintains performance over ultra-long contexts, this lets Qwen 3.5 analyze complete code repositories or process massive documents without resorting to complex chunking strategies.
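
When consuming thinking-mode output, applications usually separate the reasoning from the final answer. The helper below assumes the reasoning is wrapped in `<think>...</think>` tags, as in earlier Qwen3 chat templates; that Qwen 3.5 uses the same delimiters is an assumption, and some serving frameworks may already return the reasoning in a separate field.

```python
def split_thinking(raw):
    """Split raw model output into (reasoning, answer).

    Assumes the reasoning ends with a '</think>' tag; the opening '<think>'
    may be absent if the chat template already placed it in the prompt.
    """
    marker = "</think>"
    if marker not in raw:
        return "", raw.strip()  # no reasoning block: treat everything as the answer
    reasoning, answer = raw.rsplit(marker, 1)
    reasoning = reasoning.replace("<think>", "", 1)
    return reasoning.strip(), answer.strip()

raw_output = "<think>The user wants 17/397 as a percentage: about 4.3%.</think>\nRoughly 4.3%."
reasoning, answer = split_thinking(raw_output)
print("Reasoning:", reasoning)
print("Answer:", answer)
```

Keeping the two parts apart lets an application log the reasoning for traceability while showing users only the final answer.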

A New Family of Open-Source Models

The arrival of Qwen 3.5 marks a significant turning point in the AI landscape. By offering open-source models under the Apache 2.0 license that rival proprietary solutions from American giants, Alibaba is democratizing access to cutting-edge AI capabilities. The gap between proprietary and open-source models continues to narrow, and Chinese laboratories play a central role in this convergence.

For businesses and developers facing cost, latency, or data sovereignty constraints, Qwen 3.5 represents a credible and high-performing alternative. The promise of frontier-level reasoning at a fraction of the cost is no longer theoretical. With a complete range, from the tiny 0.8B model to the 397B behemoth, all sharing the same architecture and all natively multimodal, Qwen 3.5 offers a consistency rarely seen in the industry.